TL;DR:

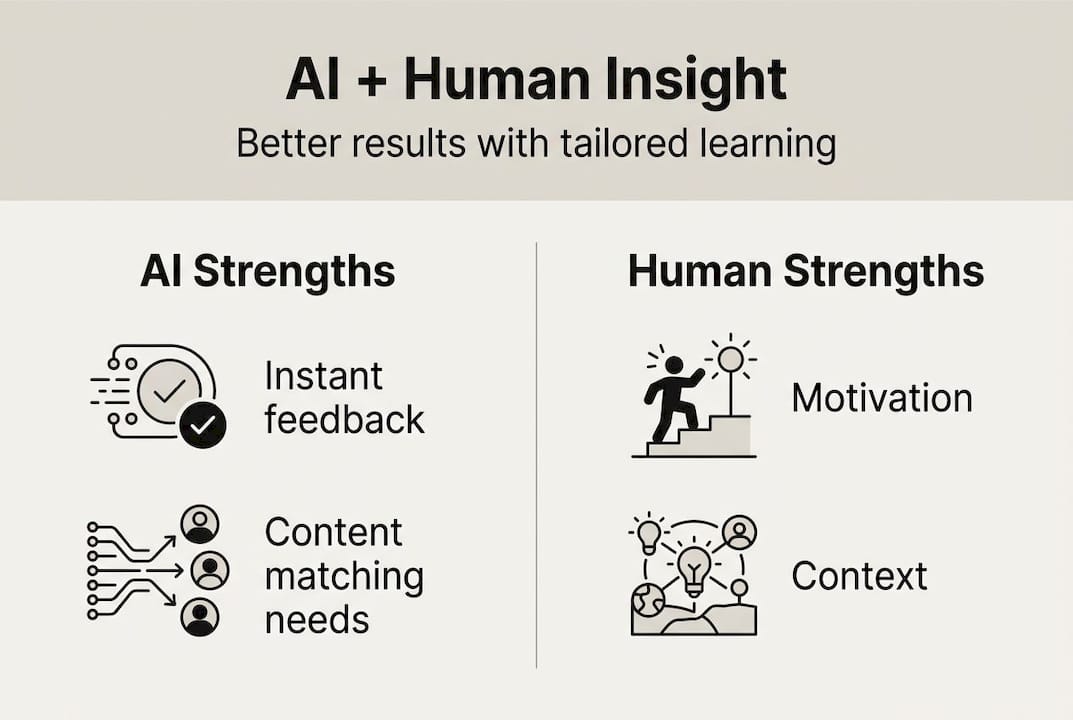

- AI enhances education but cannot replace human motivation, empathy, and ethical guidance.

- Effective learning requires a collaboration model combining AI tools with human oversight and reflection.

- Teacher and parental involvement remain crucial to ensure AI fosters genuine understanding and student growth.

AI is everywhere in education right now. Lesson plans, flashcard generators, essay feedback, revision tools. It feels like technology is finally going to fix learning for good. But here is the uncomfortable truth: AI on its own is not enough. Experts are clear that AI cannot fully replace the motivation, relationships, and ethical guidance that humans provide. The real question is not whether to use AI, but how to combine it with the human insight that makes learning genuinely transformative. This article unpacks exactly why that blend matters and how you can make it work.

Table of Contents

- The limits of AI in education

- AI-human collaboration: Key models and methodologies

- The critical role of human insight in learning

- Making AI and human insight work together in practice

- A new mindset: Why educational innovation needs both AI and empathy

- Find your perfect blend: AI tools with a human touch

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Human insight is essential | AI tools cannot personalise or motivate learning as effectively without human support. |
| Best results need collaboration | Blending AI systems and human guidance delivers superior educational outcomes. |
| Models like 'AI Sandwich' matter | Frameworks that keep humans in the loop help avoid stagnant and ineffective AI use. |
| Practical strategies are key | Students and parents should combine intelligent technology with active mentoring for student success. |

The limits of AI in education

AI-powered learning tools are impressive. They can adapt to your pace, surface relevant content, and give instant feedback at two in the morning. That is genuinely useful. But impressive is not the same as sufficient.

Here is what the research actually shows. Adaptive learning platforms rely heavily on data quality, and 73% of them use incomplete data, which leads to stagnant rather than improving outcomes. Think about that. The majority of these platforms are working from a partial picture of each student, which means their recommendations are built on shaky foundations.

AI assessments are getting sharper, too. Automated grading now correlates at 0.847 with expert human judgement, which sounds reassuring. But the remaining gap still matters enormously in high-stakes situations. And crucially, unsupervised AI use is linked to a 17% drop in grades. Students who lean entirely on AI tools, without human oversight, can actually perform worse.

Why does this happen? A few reasons stand out:

- Lack of context: AI cannot always tell whether a student is confused, discouraged, or simply tired. It responds to inputs, not emotions.

- Data gaps: Without complete learning histories, AI recommendations become generic rather than truly personalised.

- No accountability: Students can manipulate AI tools to skip genuine thinking, which bypasses actual learning entirely.

- Absence of challenge: Truly great learning involves someone pushing you further than you thought you could go. Algorithms rarely do that.

This does not mean AI is useless. Far from it. It means AI works best when it is part of a wider system, not a standalone solution. Explore how personalised success with AI genuinely improves when human input is woven in.

| AI strength | AI limitation |
|---|---|
| Instant, scalable feedback | Cannot read emotional state |
| Personalised content delivery | Relies on incomplete data |
| 24/7 availability | No ethical or motivational guidance |
| Consistent assessment | 17% grade drop without oversight |

AI-human collaboration: Key models and methodologies

Once you understand what AI cannot do alone, the next step is finding frameworks that harness the best of both worlds. Two of the most effective are the AI Sandwich and the human-in-the-loop approach.

The AI Sandwich model works exactly as it sounds. A human sets the context and purpose first. AI then does the heavy lifting: generating content, analysing data, producing a draft. Then a human returns to evaluate, critique, and refine. Human thinking bookends the AI contribution. This is not just a neat metaphor. It is a proven structure for keeping human judgement central while still benefiting from machine efficiency.

The human-in-the-loop methodology is slightly different. Here, humans are embedded throughout the AI process, checking outputs at each stage rather than only at the start and end. This approach is particularly useful for complex, long-form learning projects where errors can compound quickly.

How do these compare in practice?

| Model | Human role | AI role | Best for |
|---|---|---|---|
| AI Sandwich | Frames and reviews | Generates and processes | Essay writing, research projects |
| Human in the loop | Monitors throughout | Executes tasks | Long-term revision programmes |
| Full automation | Minimal | Dominant | Low-stakes drills and practice |

Here is a practical way to apply these approaches:

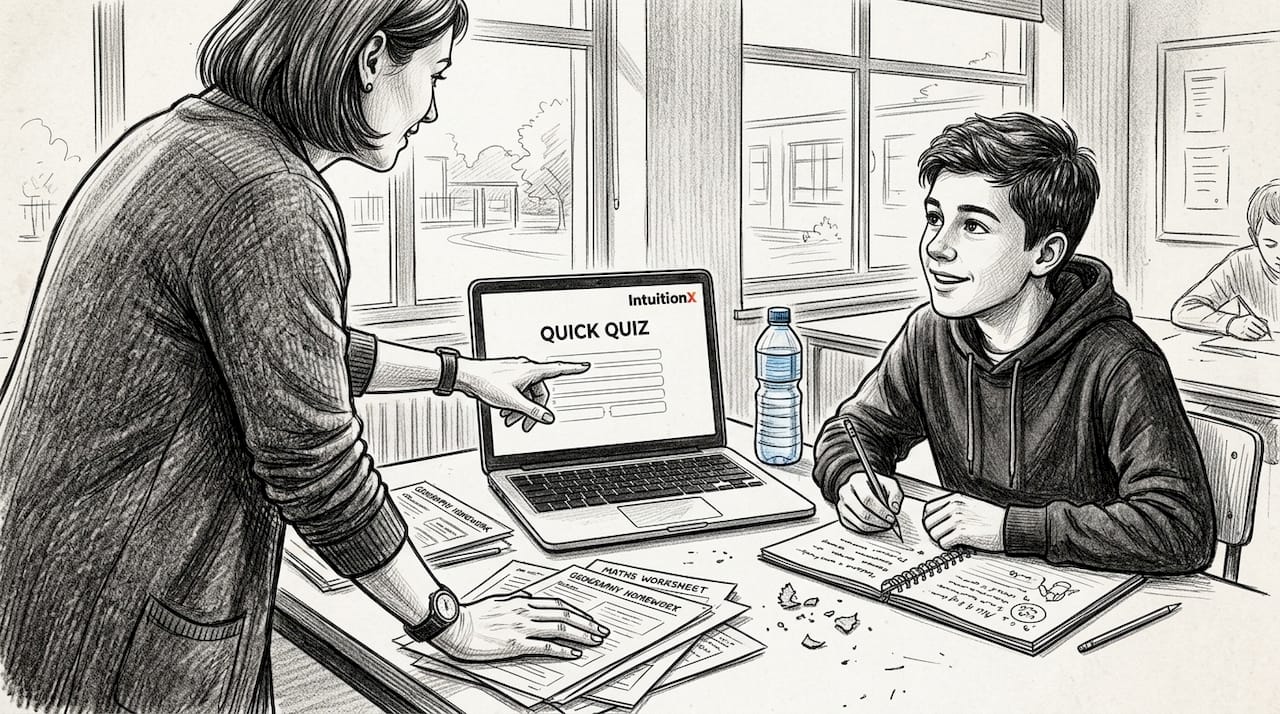

- Set the goal with a human. Before opening any AI tool, decide what you actually want to learn or achieve in this session.

- Let AI do the work. Use your chosen tool to generate explanations, practice questions, or feedback.

- Reflect with a mentor. Discuss what the AI produced. What surprised you? What felt wrong? What needs more depth?

- Revise and go again. Use that human feedback to refine your understanding before your next AI session.

Pro Tip: When using any guide to smarter AI tutoring, always add one human reflection step at the end. Even five minutes of discussing the AI output with a parent, teacher, or mentor can significantly deepen what you retain.

Experts recommend treating AI as an augmentation tool rather than a replacement. Explore top educational AI tools to find options that genuinely support this kind of collaborative approach.

The critical role of human insight in learning

AI can tell a student they got a question wrong. It cannot make them care about getting it right.

That distinction is everything. Human educators and parents bring qualities that no algorithm can replicate, and these qualities are not peripheral extras. They are the engine of real learning.

"AI will not replace teachers, but teachers who use AI will change teaching." The key word is use. Teachers guide, inspire, challenge, and support. AI serves that mission, it does not lead it.

Here are the core human qualities that make the difference:

- Inspiration: A great teacher connects a subject to a student's real life, sparking genuine curiosity rather than just compliance.

- Empathy: Humans notice when a student is struggling emotionally, not just academically. That recognition changes how support is offered.

- Ethical guidance: AI has no moral compass. Humans help students navigate questions of integrity, fairness, and purpose in their studies.

- Relational trust: Students take more risks, ask more questions, and persist longer when they feel safe with the person guiding them. 91% of US students report feeling nervous about asking questions in class, which shows how crucial that trust is.

- Long-term mentoring: A human mentor sees the arc of a student's development across months and years. AI sees the last session.

Research consistently shows better outcomes when humans actively guide AI-driven learning rather than leaving students to navigate it alone. If you are a parent wondering how to stay involved, a practical AI tutoring guide for UK parents can help you understand where your involvement adds the most value.

Pro Tip: Encourage students to treat AI as a thinking partner rather than an answer machine. Ask them to explain the AI's response back to you in their own words. That single habit builds retention, critical thinking, and confidence simultaneously. Discover more about AI learning companions and how to use them well.

Making AI and human insight work together in practice

Knowing that AI and human input complement each other is one thing. Building a daily routine around that knowledge is another. Here is a step-by-step blueprint you can start using this week.

- Identify the learning goal. Before anything else, a parent or student should define what success looks like for today's session. Vague goals produce vague results.

- Choose the right AI tool. Not all platforms are equal. Look for tools that ask questions and prompt thinking rather than just delivering answers. For exam preparation, personalised A Level support can make a significant difference.

- Engage actively with the AI output. Do not copy and accept. Question it. Try to find a flaw. Ask the AI to explain its reasoning. This is where real thinking happens.

- Add a human feedback loop. After each AI session, spend ten minutes discussing the content with a teacher, parent, or mentor. What was unclear? What felt genuinely understood?

- Adjust and revisit. Use that human feedback to shape the next AI session. This creates a cycle of continuous improvement rather than passive consumption.

- Track progress together. Weekly check-ins between students and parents or tutors help identify gaps that AI alone might miss.

The risk of skipping these human steps is real. Remember, unsupervised AI use is linked to a 17% grade drop. That is not a small number. It represents the cost of treating AI as a shortcut rather than a scaffold.

Pro Tip: Review AI recommendations with a mentor at least once a week. Ask your mentor to challenge one thing the AI suggested. That friction is where deeper understanding grows. For more on building confidence through conversation, explore empowering student learning with AI.

A new mindset: Why educational innovation needs both AI and empathy

Here is something that rarely gets said plainly: technology does not transform education. People do. AI is a tool, and like every tool, its impact depends entirely on the hands and minds directing it.

We have seen this pattern before. Calculators did not make students better at mathematics by themselves. Interactive whiteboards did not automatically engage disengaged pupils. The transformative leap always came from a teacher who understood the technology and, more importantly, understood the student.

The next wave of educational innovation will not be decided by which AI platform has the most features. It will be decided by whether educators and families are willing to ask the hard questions. Is this tool building real understanding or creating an illusion of it? Is my child learning to think, or learning to prompt?

Human insight is not a luxury addition to AI-powered learning. It is the foundation that makes it worthwhile. Keeping pace with education trends for 2026 matters, but not more than staying grounded in what learning actually requires: curiosity, challenge, and the confidence to fail and try again.

AI can accelerate all of that. But only if the humans around the student are actively involved.

Find your perfect blend: AI tools with a human touch

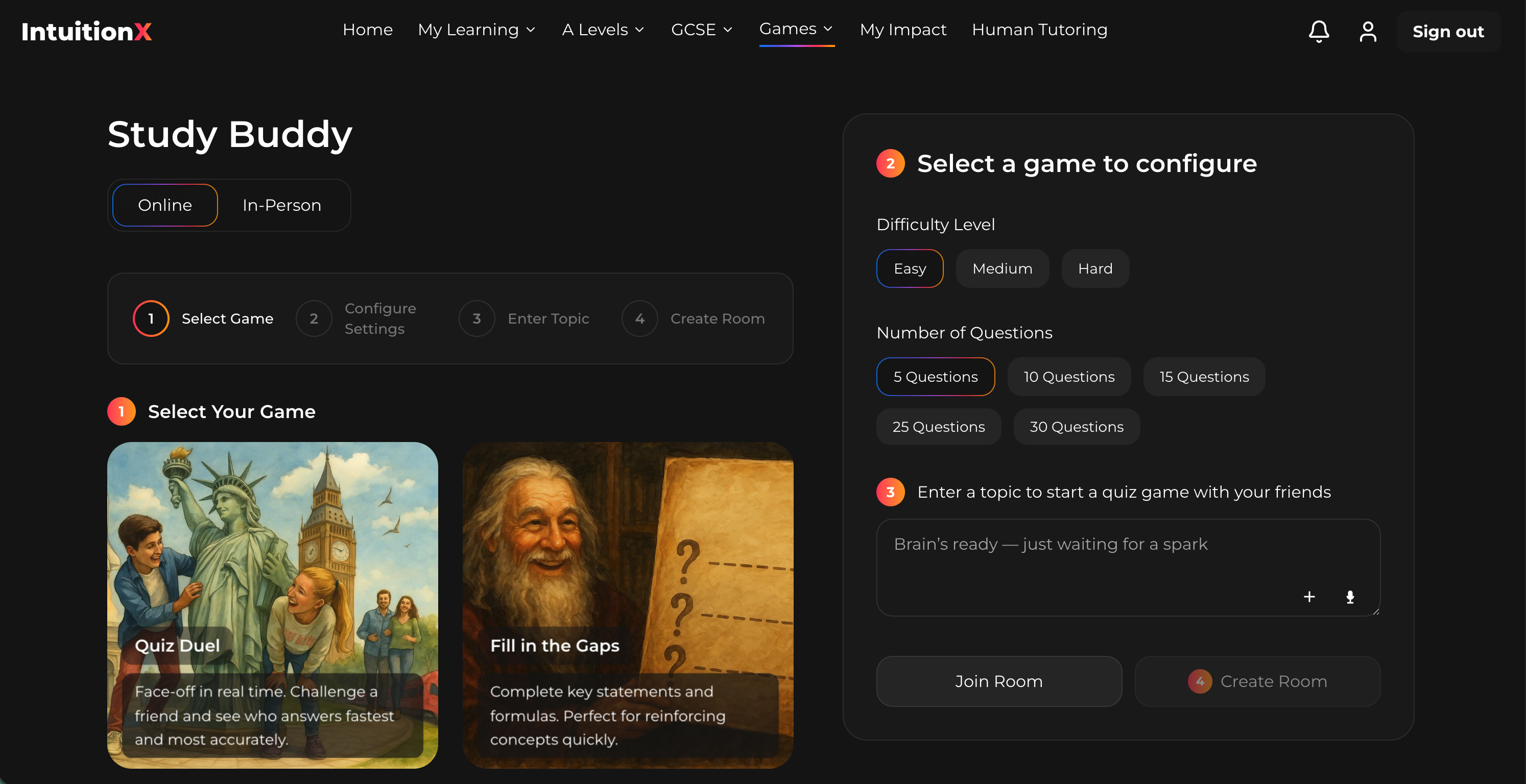

If this article has clarified one thing, it is that the most effective learning happens at the intersection of brilliant AI and genuine human insight. That is exactly what IntuitionX is built around.

Our IntuitionX AI companion combines Oxbridge-level academic rigour with Socratic questioning and memory science, so students are guided to think rather than just receive answers. It is available 24/7, backed by Sir Anthony Seldon, and designed to work alongside the humans in a student's life, not replace them. Ready to experience that balance for yourself? Get started with IntuitionX today and discover what genuinely personalised, human-centred AI learning feels like.

Frequently asked questions

What does 'human insight' mean in AI-driven education?

Human insight refers to the unique ability of teachers, parents, or mentors to provide context, encouragement, and ethical guidance where AI cannot. Experts emphasise that AI augments rather than replaces these essential human roles.

How does human involvement make AI-powered learning more effective?

Human involvement helps AI adapt to real needs, prevents errors from compounding, and keeps learners motivated. Without it, adaptive platforms using incomplete data tend to produce stagnant rather than improving outcomes.

Can AI ever fully replace teachers and parents?

No. Experts agree that AI is a powerful support tool, but the motivation, empathy, and relational trust that teachers and parents provide cannot be replicated by any algorithm.

What are some risks of using AI without human input?

Students who rely entirely on AI tools without human oversight can see a 17% drop in grades, alongside missed context, unchallenged errors, and reduced critical thinking.

How can parents support AI-powered learning at home?

Parents can set clear learning goals before each session, discuss AI outputs with their child afterwards, and provide the encouragement and accountability that technology simply cannot replicate. Their active involvement is one of the strongest predictors of positive learning outcomes.